This post did not contain any content.

-

@cR0w more like idiots using AI is a greater risk

-

@cR0w attackers only need to succeed once. Defenders need to succeed every time.

When using a broad system with lots of capabilities that is also stochastic and unpredictable, guess which side it's most useful for?

-

@cR0w where does "execs using AI" rank in this?

-

@cR0w and third parties using AI is worse than both put together

@bakachu Maybe. It depends on the org's reliance on said third parties.

-

@cR0w true, i speak under the influence of bias and exhaustion

-

@cR0w Why?

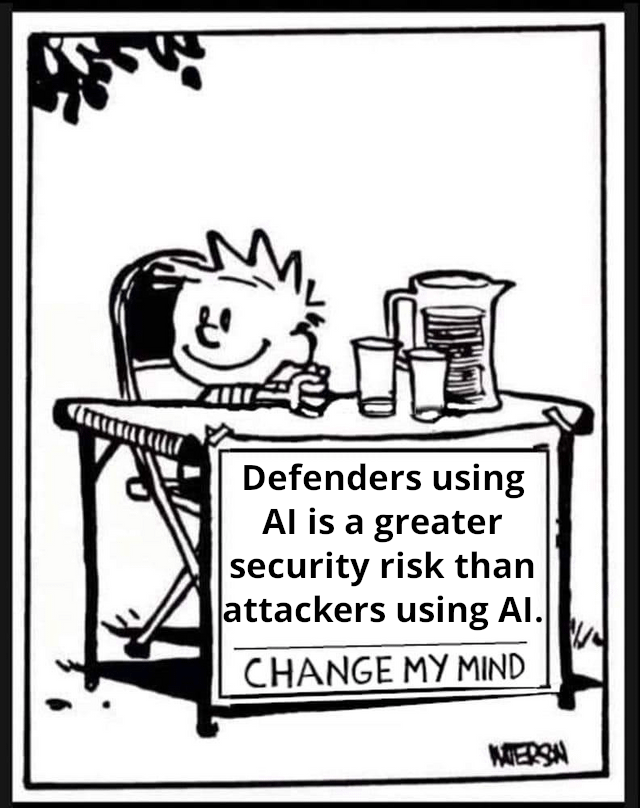

not trying to change your mind, but interested to read the thought process

@beyondmachines1 AI tools used by attackers have not materially impacted capabilities beyond scope and scale, but that does not change the likelihood of occurrence or the severity of impact to orgs who were already modeling their risk based on state-of-the-art threats, which should be everyone at this point. Defenders relying on nondeterministic and unaccountable systems are inevitably going to miss things due to the way existing AI tools work.

-

@cR0w i don't think anybody ever denied this, actually this is the common opinion

@kirby It may be common on fedi but it certainly isn't common in my experience in industry. I'm surrounded by AI-enabled attacker pearl clutchers and tech bros promising to save the world with their AI SOC magic beans.

-

@cR0w more like idiots using AI is a greater risk

@alerikaisattera Is there another term for people using AI if they aren't required to?

-

@cR0w attackers only need to succeed once. Defenders need to succeed every time.

When using a broad system with lots of capabilities that is also stochastic and unpredictable, guess which side it's most useful for?

@loke Attackers only need to succeed once for initial access, but defenders only need to be right once to mitigate after initial access. Those cute little bugs being found by the multi-billion-dollar AI systems do not imply any legitimate offensive capabilities.

-

@cR0w where does "execs using AI" rank in this?

@nyanbinary Tippy top. Highest risk to the org.

-

@cR0w Blue Team practically working for Red Team.

-

@cR0w I actually disagree on this one, with a caveat.

If the AI is only allowed to block, not allow, and is part of a layered system that includes traditional safeties, then there is no practical harm in adding AI to a toolset (AI is bad morally, but that's not my point here).

Machine learning has been used to detect IoCs for a while now. I know SentinelOne was announcing that capability around 2019 (the MSP I worked for used them, so I got their newsletter).

1/2

-

@cR0w

1000%. AI favors the defenders my moopsy robot ass. For red teaming, just kinda working is good enough; blue team doesn't have those luxuries. And that's not even including the attack surface of AI itself.

-

@cR0w I actually disagree on this one, with a caveat.

If the AI is only allowed to block, not allow, and is part of a layered system that includes traditional safeties, then there is no practical harm in adding AI to a toolset (AI is bad morally, but that's not my point here).

Machine learning has been used to detect IoCs for a while now. I know SentinelOne was announcing that capability around 2019 (the MSP I worked for used them, so I got their newsletter).

1/2

@cR0w this also doesn't consider user feelings, because false positives are definitely more likely when using an AI or machine learning element, but I tend to err on the side of "false positives fine, false negatives bad," no matter the impact.

Again, this is not apologetics for how garbage and damaging AI companies are, because they are very much both of those things, but from a pure performance and security standpoint, structured, layered use of AI to detect and block intrusions can work fine.

-

@DemonHouser If an AI system inadvertently blocks a critical system in my world, really bad things can happen. And if they do, who is accountable? A human making a human mistake is held accountable. An AI system making a "mistake" is just "lol, whoops, it's still learning" and no one is held accountable.

Also, I dislike how now that modern AI has been proven to be hot garbage people are using traditional ML as a counterpoint. They are not the same despite the overlap in their usage.

-

@beyondmachines1 AI tools used by attackers have not materially impacted capabilities beyond scope and scale, but that does not change the likelihood of occurrence or the severity of impact to orgs who were already modeling their risk based on state-of-the-art threats, which should be everyone at this point. Defenders relying on nondeterministic and unaccountable systems are inevitably going to miss things due to the way existing AI tools work.

@cR0w your argument assumes full discipline and coverage of the risk assessment.

Wildly optimistic, given that most breaches still boil down to basics like credentials, human factor and misconfigurations.

No horse in the AI race. Just saying the reality is far from "should be everyone at this point"

-

jwcph@helvede.net shared this topic