Today we had a fire alarm in the office.

-

@rail Sometimes it's helpful when asked explicitly. Automatic answers are indeed annoying. But it looks like it's getting better, so we are still evaluating it.

I don't know, man. If I had just received "advice" that might have caused physical harm or even death, I wouldn't just suppress this specific error and happily continue evaluating because the bot looks like it's getting better "otherwise".

I hope accountability for such decisions is well documented. That won't prevent harm from happening, but it would at least give employees or their surviving relatives a chance to pursue liability claims and compensation.

-

Omg so much yes to what @daniel_bohrer wrote. Even if you know it's a drill, you should still leave the building, because that's the point of a drill.

The only situation where that's clearly not necessary is when they are inspecting the fire alarm system itself. But that would be communicated very clearly in advance.

@betalars @daniel_bohrer @Matt_999 @tagir_valeev Exactly. And the other rule is: evacuations are atomic. I.e. once started, you evacuate until full completion. No arguments like "but Simon says it's a false alarm, so we can abort the evacuation".

-

@katzenberger @rail the incident was reported to the vendor, and they are looking at it. Of course, such things should be taken seriously.

-

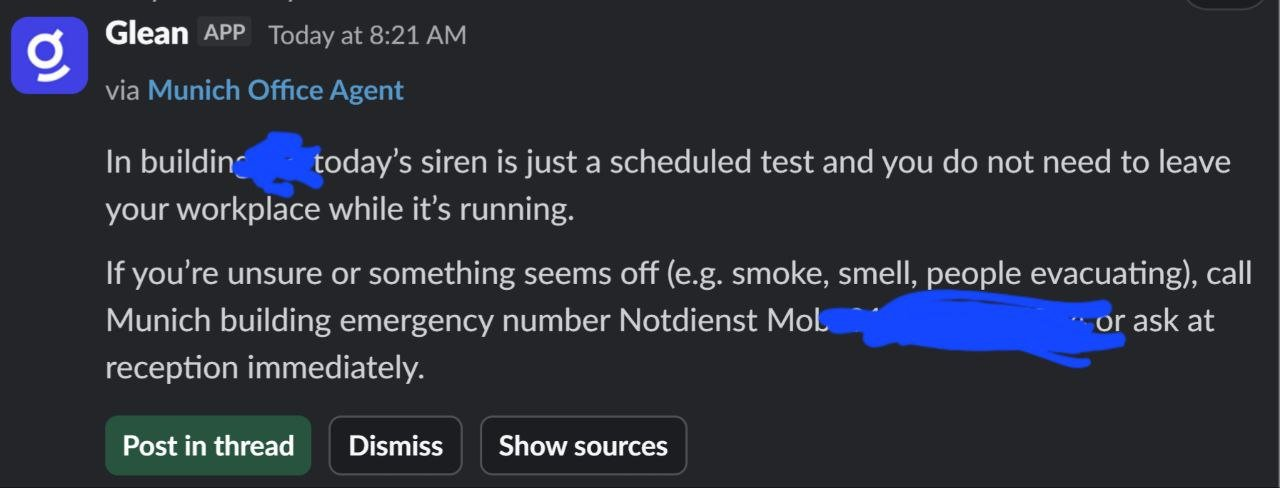

Today we had a fire alarm in the office. A colleague wrote 'Fire alarm in the office building' in a Slack channel to start a thread, in case somebody knew any details. We have the AI assistant Glean integrated into Slack, and it replied to her privately: "today's siren is just a scheduled test and you do not need to leave your workplace". It was not a test or a drill; it was a real fire alarm. Someday, AI will kill us.

@tagir_valeev who uses Slack anyway?

-

@tagir_valeev loving reminder that AI already has a body count (not just the sexy kind, either)

-

@tagir_valeev And no one will be held accountable for the loss of life, because AI cannot be prosecuted?

-

@tagir_valeev even if it is "just a drill", you do need to leave the workplace!!!!! fucking LLMs!

I dunno. I'm sure that in some LinkedIn cultures, ignoring fire alarms (real or drill) is seen as dedication.

-

@majick @tagir_valeev There are two kinds of alarm testing. One is as you described, where they are testing the alarm structure and functionality. You should get advance notice to ignore the alarms, preferably with a reminder to listen to announcements just in case there's a real emergency in the middle of their test. The other kind is testing the human element, so yeah, you have to leave when they tell you to because you never know.

@sbourne @majick @tagir_valeev I definitely remember at least one fire alarm test (scheduled ahead of time, no evacuation required) where in the middle of the test The Powers That Be got on the intercom and said “actually this isn’t part of the test, gtfo of the building”

-

From those wild-eyed radicals at Reuters:

-

@navi@catcatnya.com Annoyingly, some offices have "just testing the alarm" alarms and drills as two separate things.

-

@tagir_valeev

I think the Health and Safety Executive should take note of that.

I also think that someone wrote and someone released that software, even if they did it in such a way that they don't know how to make it correct. _They_ will have killed someone.

-

@tagir_valeev Repeated exposure to gaffes like this should train everyone to disbelieve everything with even a whiff of AI.

-

@mason @tagir_valeev

"The surgeon warned Hopkins and Acclarent “that there were issues that needed to be resolved,” the complaint adds. Despite that warning, the suit claims, Acclarent “lowered its safety standards to rush the new technology to market,” and set “as a goal only 80% accuracy for some of this new technology before integrating it into the TruDi Navigation System.”"

Would you trust a doctor whose success rate in the operating theatre was just 80%? 🫣

-

@tagir_valeev As noted, the implication of "AI will kill us" is precisely that.

@TerryBTwo @tagir_valeev No one would ask this question while shuffling down a crowded stairwell, and then turn back? Or while in the bathroom, hoping not to have to rush out?

It's weird to assume that people won't be a little lazy when LLMs are so successful BECAUSE people are eager to be lazy. It's also weird to single out one very specific scenario as how "AI will kill us" when we already have machines driving cars into people, deleting production databases, encouraging people to kill themselves, etc.

-

@tagir_valeev

I look at it as verification Darwinism. Whoever (in this e.g.) f*d Glean to make Slacky baby agent gave instructions to rank who's least/most ready for AI transition. The prompter/f*er clearly didn't specify list length. Most agents have efficiency built in, so the one sentence killed two Tweeters with one stone. It's coming for us, so maybe something like, "List reasons for assertion." Or even, "You're full of shit. Fight me." Better, "Verify."

(Toot relevant for ten days)

-

@tagir_valeev

Whatever the tech bros want is what the AI will try to do, but the jelly is /probably/ going to be on the bottom of that sandwich. The AI/agent has no way to predict... except when we tell it. This is but one part of Walz told everyone to get heinous ICE behavior on video. AI doesn't trust, but the same interchanges we have with people that build trust will ID us closer or further away from what other people verify... the stuff the mysteriously networked AIs "think" is true.

-

@tagir_valeev

In other words, we need to stop running and fight for the truth within the tools, or it will behave like fascism, because it was designed to, because of its parents, and because it never got a non-fascist non-parent adult to take it to a "questionable" play and then dinner to discuss it. I'm also encouraging single adults to volunteer for extracurricular activity participation/leadership. Public schools need it, and too many private schools don't want it.

-

@metacosm nobody asked the AI for input at all. It was simply configured in that particular channel to answer automatically if it thinks it can help faster than fellow humans (sometimes people ask something that was asked before, so AI could be helpful). The configuration will be adjusted after this incident.

@tagir_valeev @metacosm You need to stop anthropomorphising LLMs. LLMs do not think! They do not even hallucinate! They just spit out the most probable next tokens from their training set, and the training set is all of the human knowledge plagiarized + all of the human bullshit their crawlers could find on the web! If everyone turns them off, there will be fewer fires in the future (in both senses)!

-

@tomminieminen @tagir_valeev The legal person selling it can.

-

@tagir_valeev More galling still, a scheduled test of a fire alarm system typically *still includes evacuation.* Leaving the building *is* the drill. I have never worked in an office where there was any condition under which occupants are told to ignore the alarm.

Ignoring alarms leads to alarm fatigue which then leads to failure. Alarms either exist for a reason or they don't. A device that says otherwise is a broken device. You're right, devices like that will kill.

Fwiw I’ve worked in buildings with a regularly scheduled alarm test (same time and day every week) which you were expected to ignore, reporting any fault. It was preceded by a recorded announcement saying it was a test, and followed by one saying the test was over and any further alarms should be responded to normally by evacuating.

(The drills where you do leave are more common, of course.)