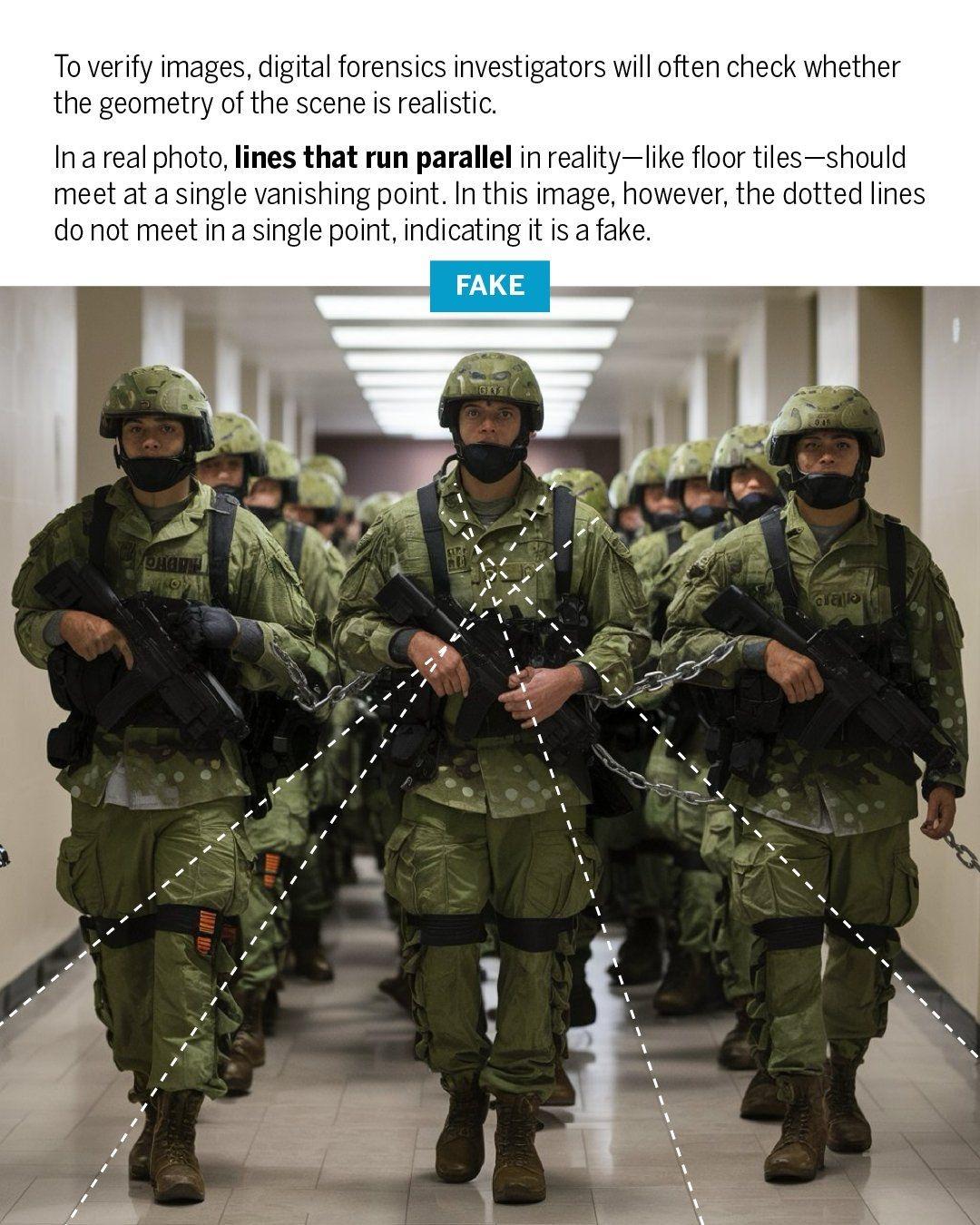

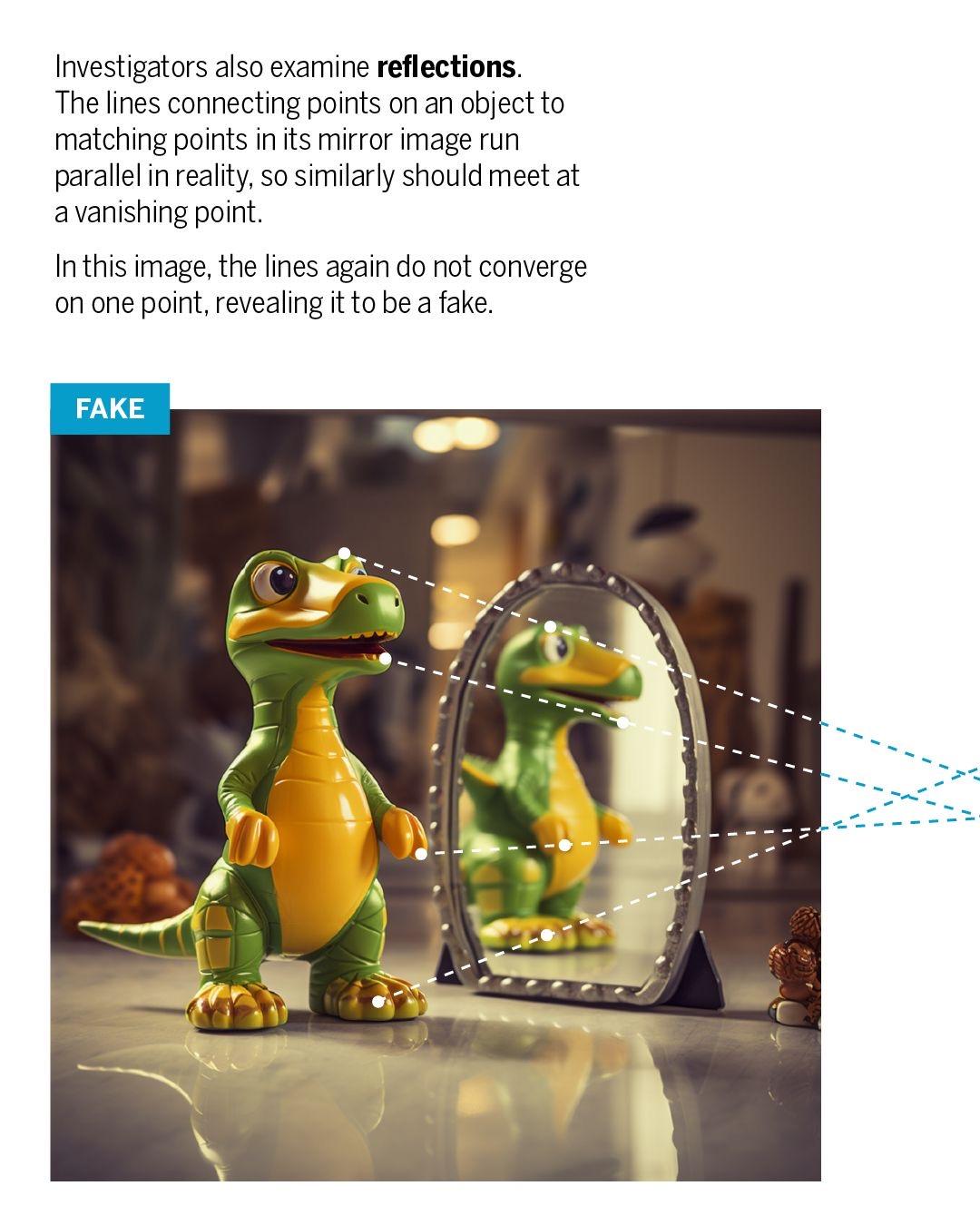

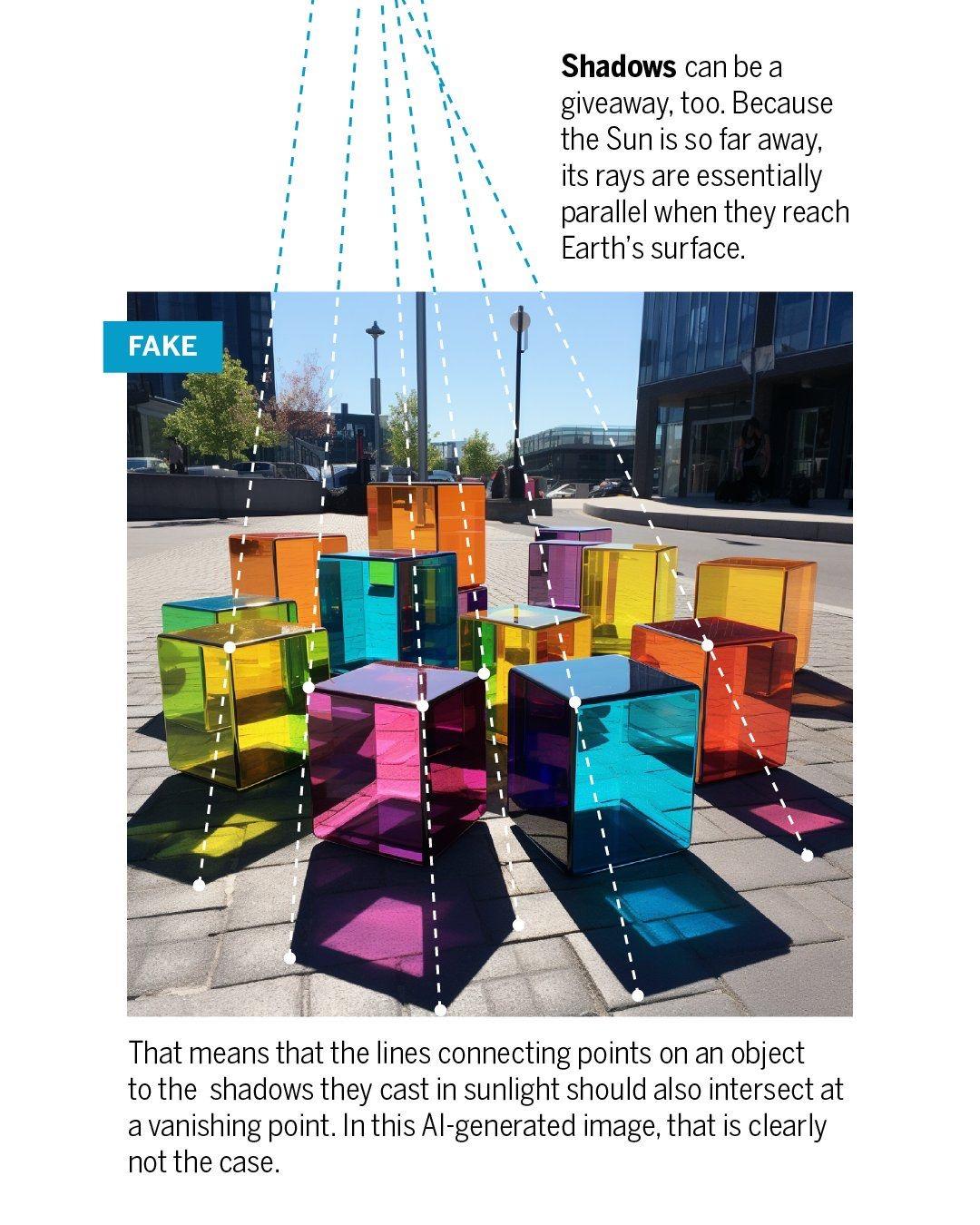

#Deepfakes are everywhere, but #DigitalForensics investigators are fighting back:

-

@aearo @FabMusacchio What's interesting to me is WHY AI-generated images may never get it right.

Put simply, the consumers of AI-generated images do not care whether all the lines properly converge on a vanishing point. Human vision may care about weird extra fingers, but vanishing point convergence? Nope. Don't care.

Most human viewers will never notice these perspective errors, so there's little incentive to train AI models to fix them.

That, but I also think it's a really hard, abstract thing to train the models on regardless.

I could be wrong about this! Maybe it's easier than I think. But it's not like you can just say to the model "oh yeah, and make sure all the edges of things follow the rules of perspective." It has to learn those rules the same way it learns everything else - basically, by looking at a bunch of examples and getting a "feel" for what's right. (Well, "a feel" = "the values of the model's weights updated to produce this result" and so forth, but yunno.)

But it's not the kind of detail that immediately jumps out, as long as it's not *too* wrong. Observing it requires both figuring out which lines are relevant, and knowing how those lines should behave, and image-gen AI has no special ability to do either of those things. It has no ability to follow rules precisely.

The fact that human brains can also look at the pictures and not immediately go "wait, that's wrong" gives me confidence that AI models won't get it either. Even humans generally need to get out a ruler and start measuring. I think it's hard for human brains to just see it for pretty much the same reason it's hard for AI, but until AGI is a thing, strategies like "know the rules concretely" and "draw a line with a ruler" are more or less out of reach for the AI.
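For what it's worth, the "get out a ruler" check is easy to write down once someone has already picked the relevant edges, which is the hard part. Here's a rough sketch, not anyone's actual forensic tool: the function names are made up for illustration, and it assumes you hand it segments (pairs of pixel coordinates) that are supposed to be parallel in the real scene. It extends each segment to a full line, intersects every pair, and reports how far the intersections scatter. Lines that share one vanishing point give a spread near zero.

```python
import math
from itertools import combinations

def line_through(p, q):
    """Return (a, b, c) for the line a*x + b*y + c = 0 through points p and q."""
    (x1, y1), (x2, y2) = p, q
    return (y1 - y2, x2 - x1, x1 * y2 - x2 * y1)

def intersection(l1, l2):
    """Return the point where two lines meet, or None if they are parallel."""
    a1, b1, c1 = l1
    a2, b2, c2 = l2
    w = a1 * b2 - a2 * b1
    if abs(w) < 1e-12:
        return None
    return ((b1 * c2 - b2 * c1) / w, (c1 * a2 - c2 * a1) / w)

def convergence_spread(segments):
    """Largest distance from any pairwise intersection to their centroid.

    `segments` is a list of ((x1, y1), (x2, y2)) pairs, assumed (by the
    caller) to be edges that are parallel in the 3D scene. Returns None
    if no two lines intersect.
    """
    lines = [line_through(p, q) for p, q in segments]
    points = []
    for l1, l2 in combinations(lines, 2):
        pt = intersection(l1, l2)
        if pt is not None:
            points.append(pt)
    if not points:
        return None
    cx = sum(x for x, _ in points) / len(points)
    cy = sum(y for _, y in points) / len(points)
    return max(math.hypot(x - cx, y - cy) for x, y in points)

# Three edges that all aim at the point (10, 5): consistent perspective.
consistent = [((0, 0), (5, 2.5)), ((0, 10), (5, 7.5)), ((0, 5), (5, 5))]
# Same edges, but the third one is tilted slightly: no shared vanishing point.
inconsistent = [((0, 0), (5, 2.5)), ((0, 10), (5, 7.5)), ((0, 5), (5, 6))]

print(convergence_spread(consistent))
print(convergence_spread(inconsistent))
```

How big a spread counts as "wrong" depends on image size and how accurately the edges were traced, so any threshold would be a judgment call. And none of this helps with the step of deciding which lines are relevant in the first place.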

-

