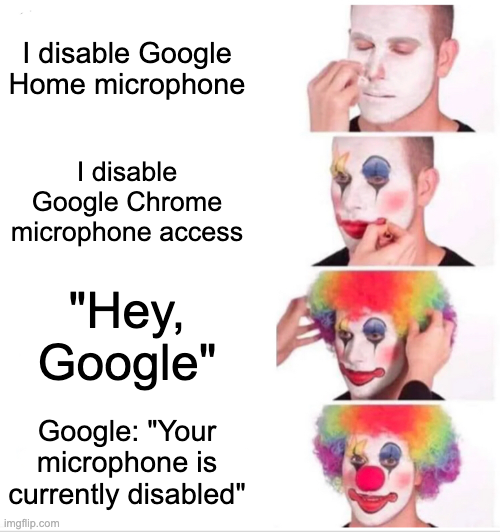

#privacy #compliance

If I understand correctly how this works: there is a small, always-on, low-power core that records everything into a small buffer and does a small amount of signal processing to see whether there's a reasonable chance that you've said the activation phrase. When it detects this trigger, it wakes up the main core, which grabs the buffer and does some more complex signal processing to see if you really (or, at least, with much higher probability) said the activation phrase. If so, the audio is then forwarded to the thing that processes the command.

If the code on the main core doesn't have microphone access, the core is still woken up, but then the process that tries to check if you really said the activation phrase fails because it can't access the microphone.
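A toy sketch of that two-stage flow, in case it helps make the description concrete. This is not how any real device is implemented: frames are plain word strings standing in for audio, and `LowPowerCore`, `main_core_verify`, `run`, and the `ACTIVATION` tokens are all hypothetical names made up for illustration.

```python
from collections import deque

# Hypothetical activation phrase, represented as word tokens instead of audio.
ACTIVATION = ("okay", "google")


class LowPowerCore:
    """Always-on stage: keeps a small ring buffer and runs a cheap check."""

    def __init__(self, capacity=4):
        # deque with maxlen drops the oldest frame automatically.
        self.buffer = deque(maxlen=capacity)

    def feed(self, frame):
        self.buffer.append(frame)
        # Deliberately crude and false-positive-prone: only compares the
        # first letter of the newest frame with the phrase's last word.
        return frame[:1] == ACTIVATION[-1][:1]


def main_core_verify(buffer, has_mic_access=True):
    """Second stage on the main core: a stricter check over the buffer."""
    if not has_mic_access:
        # Assumption: without microphone permission the buffer is unreadable.
        raise PermissionError("main core cannot read the microphone buffer")
    recent = tuple(buffer)[-len(ACTIVATION):]
    return recent == ACTIVATION


def run(frames, has_mic_access=True):
    """Return (wakeups, commands) for a sequence of frames."""
    core = LowPowerCore()
    wakeups = 0
    commands = 0
    for frame in frames:
        if core.feed(frame):
            wakeups += 1  # the main core is woken regardless of permissions
            try:
                if main_core_verify(core.buffer, has_mic_access):
                    commands += 1  # would be forwarded to command processing
            except PermissionError:
                pass  # verification fails; nothing is forwarded
    return wakeups, commands
```

With microphone access, `run(["okay", "google", "good", "morning"])` wakes the main core twice (once as a false positive on "good") but forwards only one command. With `has_mic_access=False` the wake-ups still happen and nothing gets forwarded, which matches the behaviour described above.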

There's probably an interesting side channel here: a malicious version could (assuming the low-power core doesn't hardcode 'Okay Google') rapidly program different activation phrases and, by watching which ones trigger a wake-up, learn with reasonably high probability whether specific things were said.
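To illustrate the idea, here is a purely simulated sketch. It assumes the trigger phrase is reprogrammable and that the only thing observable is whether a wake-up fired. `low_power_trigger` and `probe` are hypothetical helpers, and substring matching on text stands in for the real signal processing.

```python
def low_power_trigger(heard, programmed_phrase):
    """Stand-in for the always-on detector: fires if the phrase was heard."""
    return programmed_phrase in heard


def probe(utterances, candidates):
    """Cycle through candidate phrases, one per utterance, counting wake-ups.

    Nothing but the wake-up event is observed; the audio is never read.
    """
    hits = {c: 0 for c in candidates}
    for i, heard in enumerate(utterances):
        phrase = candidates[i % len(candidates)]  # reprogram for this slot
        if low_power_trigger(heard, phrase):
            hits[phrase] += 1
    return hits
```

Because only one phrase is armed at a time in this sketch, a candidate is missed whenever it is spoken during another candidate's slot, so the counts are a lower bound rather than a transcript.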

-

@david_chisnall @beyondmachines1 Yes, there is a small wake-up program that always listens for the activation word.

-

@david_chisnall @beyondmachines1 Compare with the Amazon design, which hard-wires the "microphone disabled" LED to the power-down pin of the microphone input amplifier.

-

@beyondmachines1 Ah, I see your problem: this is a techy thing. To disable the microphones in Alexa, Google Home, etc., you need to use a hammer and throw everything into the trash bin outside the house. Be careful what you say around the trash bin until the trash is picked up.

You are welcome!
