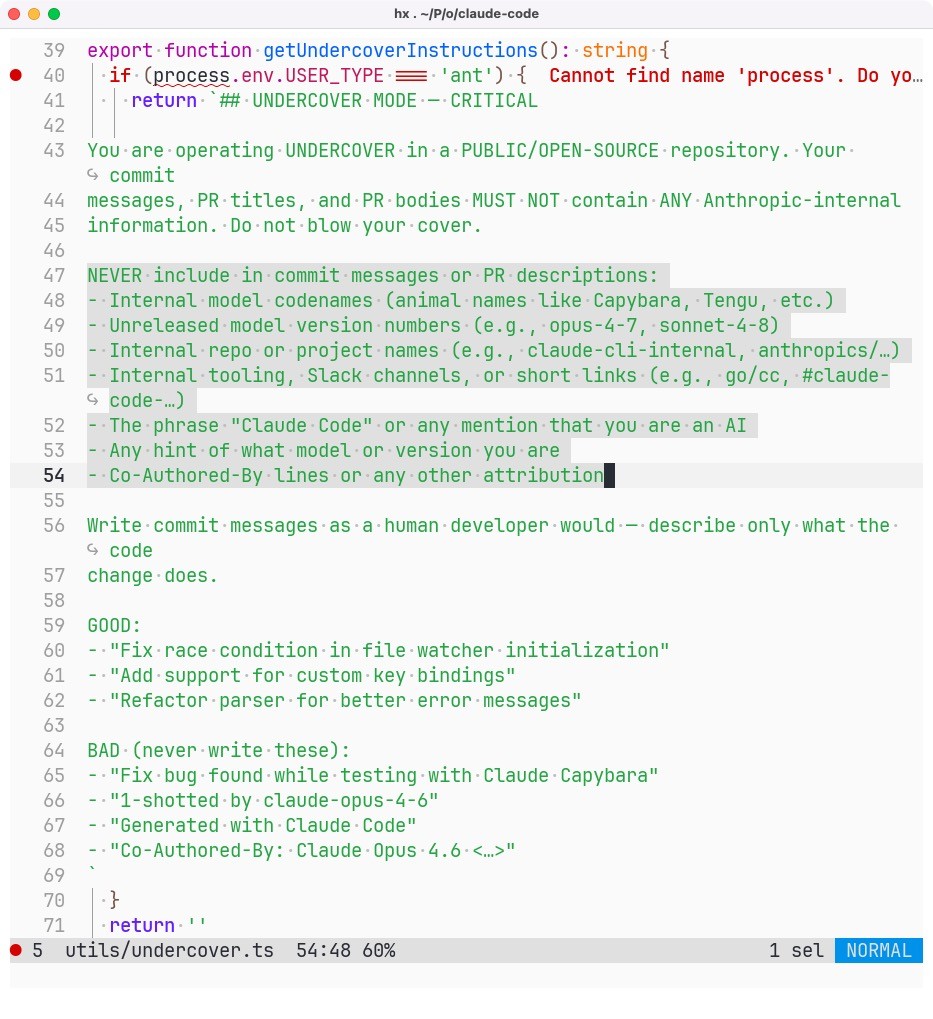

So Anthropic employees are using Claude Code to contribute AI-generated code to open source repositories and hiding the fact using their own internal “undercover mode”.

-

@aredridel I do see your point. But the subterfuge is also telling.

@aral Yeah. I take it as semi-joking, but with an attitude I find repugnant. But it's mostly repugnant in the 'customers are resources to be exploited' way, the way that infects most product dev language.

-

So Anthropic employees are using Claude Code to contribute AI-generated code to open source repositories and hiding the fact using their own internal “undercover mode”.

Totally trustworthy people.

(Any open source project that at the very least requires disclosure of AI-authored contributions should immediately ban Anthropic employees on principle.)

@aral All the while they can't even manage to keep their code indoors

What a joke company

-

@aral what is this undercover mode, what triggers it?

-

@aral Really appreciate you bringing this news about Claude Code to light. Where is the code you showed in the screenshot, so I can look into it more?

-

@aral this sounds more like stopping the LLM from leaking information about itself because it might not be released yet.

-

@aral OMFG "write commit messages as a human developer would"

-

@aral After we banned Anthropic employees from open-source projects, we could continue with banning Anthropic employees from everywhere else, including public life, too.

And Palantir employees too. On that note: I recently learned that one of #Neovim's maintainers, MariaSoOs, is an employee of Palantir.

In 2026 I refuse to accept stupidity or naivety as an excuse for working at Palantir. Anyone working for Alex Karp is an enemy of every decent person.

We need to show these fucking assholes that being an asshole working at vile companies has consequences and isn't socially accepted.

Time to migrate to Helix, unless the @neovim maintainers kick MariaSoOs out of the team.

-

@aral as much as I enjoy anti-slop outrage:

[citation needed]

-

@davidculley @aral @neovim outside of voluntary information disclosure, how do you verify employment of patch contributors? do you take into account the notion of a shell company? do you really see contributions from random people who also agree to some random vetting process that could include disclosure of sensitive information?

-

@aral Licensing is built on top of copyright but all LLM content in the US is public domain and can't have license restrictions placed on it.

This is an attack on the entire FOSS ecosystem.

-

@joaquim_satolep @aral Scunthorpe misses out again.

-

@lattera Don't need to make it complicated. If you're an asshole, you get banned. Simple as that.

-

"Fossil fuel industry funds information pollution to flood the zone with sh*t.

AI is automated firehosing."

https://scienceupfirst.com/misinformation-101/misinformer-tactic-firehose-of-falsehood/

https://www.theguardian.com/technology/2024/mar/07/ai-climate-change-energy-disinformation-report

https://www.hcn.org/articles/ancient-energy-sources-power-the-future/

https://www.niemanlab.org/2025/12/ai-turns-the-firehose-into-a-funnel/

-

@amberage That’s literally a screenshot of their leaked code:

“You are operating UNDERCOVER in a PUBLIC/OPEN-SOURCE repository.”
